Covariance can be computed for a whole population or for a sample. For example, the population covariance between two random variables X and Y is the sum of the products of their deviations from the means, divided by N. For a sample covariance, the formula is slightly adjusted: the sum is divided by N - 1 instead of N. Here Xi are the values of the X-variable, Yi are the values of the Y-variable, and N is the number of scores in each set of data.

Covariance matrices appear in many settings. In data assimilation, for instance, the background error covariance matrix (B) describes errors in the background state (the forecast from the previous analysis).

Typical questions when comparing such values are which one shows that the data points are more dispersed, and whether the variance is larger or not.

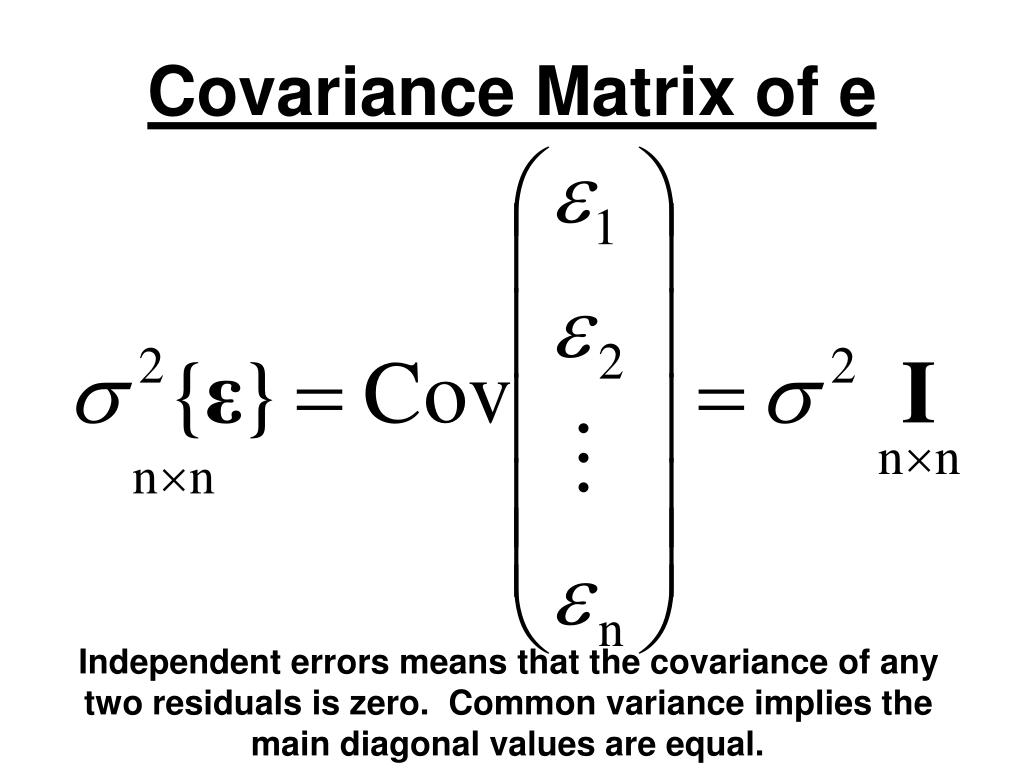
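As a minimal sketch of the two formulas described above, the following pure-Python functions compute the population and sample covariance of two equal-length lists. The function names and the sample data are illustrative assumptions, not taken from any particular library.

```python
def population_covariance(xs, ys):
    """Sum of products of deviations from the means, divided by N."""
    if len(xs) != len(ys) or len(xs) == 0:
        raise ValueError("xs and ys must be non-empty and of equal length")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    return sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / n


def sample_covariance(xs, ys):
    """Same sum of products, divided by N - 1 instead of N."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("xs and ys must have equal length of at least 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    return sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / (n - 1)


# Hypothetical data: ys moves in the same direction as xs.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]
pop = population_covariance(xs, ys)
samp = sample_covariance(xs, ys)
print("population covariance:", pop)
print("sample covariance:", samp)
```

Both values are positive here because the two lists increase together. The sample value is always the larger of the two in magnitude, since the same sum is divided by a smaller number.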

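Suppose the determinant of my covariance matrix A is 100 and the determinant of another covariance matrix B is 5, where covariance is being used to represent the variance of 3D coordinates that I have. The determinant of a covariance matrix is sometimes called the generalized variance, so for matrices of the same dimension the larger determinant indicates the larger overall spread.

We use the following formula to compute population covariance:

\[ \operatorname{Cov}(X, Y) = \frac{\sum_{i=1}^{N} (X_i - \bar{X})(Y_i - \bar{Y})}{N} = \frac{\sum_{i=1}^{N} x_i y_i}{N} \]

where \( x_i = X_i - \bar{X} \) and \( y_i = Y_i - \bar{Y} \) are the deviations from the means.

The covariance matrix is positive semi-definite, since the associated quadratic form is non-negative everywhere. The trace of the covariance matrix gives the sum of all the variances, and its diagonal elements give the variance of each variable in the data.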
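The properties listed above can be checked numerically. The sketch below assumes NumPy is available and uses randomly generated 3D points as stand-in data. The array names and the scaling factors are hypothetical.

```python
import numpy as np

# Hypothetical 3D coordinates: 200 points with different spread per axis.
rng = np.random.default_rng(0)
points = rng.normal(size=(200, 3)) * np.array([3.0, 1.0, 0.5])

# 3x3 sample covariance matrix (rows are observations, so rowvar=False).
cov = np.cov(points, rowvar=False)

# Per-axis sample variances, using the same N - 1 divisor as np.cov.
variances = points.var(axis=0, ddof=1)

# Diagonal elements are the variances; the trace is their sum.
print("diagonal matches variances:", np.allclose(np.diag(cov), variances))
print("trace equals sum of variances:", np.isclose(np.trace(cov), variances.sum()))

# Positive semi-definite: all eigenvalues are non-negative (up to rounding).
eigenvalues = np.linalg.eigvalsh(cov)
print("positive semi-definite:", bool(np.all(eigenvalues >= -1e-12)))

# The determinant equals the product of the eigenvalues.
det = np.linalg.det(cov)
print("determinant:", det)
```

Comparing determinants only makes sense between covariance matrices of the same dimension and in the same units, since the determinant scales with the units of every axis.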

Covariance is a measure of the extent to which corresponding elements from two sets of ordered data move in the same direction.

Definition of mean vector and variance-covariance matrix: the mean vector consists of the means of each variable, and the variance-covariance matrix consists of the variances of the variables along the main diagonal and the covariances between each pair of variables in the other matrix positions. The sample covariance matrix makes it possible to find the variance along any direction in data space.

How do you find eigenvalues and eigenvectors from the covariance matrix? You can find both eigenvectors and eigenvalues using NumPy in Python. The method has good performance only when the eigenvectors are close.

In shrinkage estimation, the shrinkage constant converges to zero as the number of observations goes up. Under a condition that is not preserved here, the shrinkage parameter was above 1.

In one formulation, the covariance matrix is diagonal, with the jth diagonal element given by

\[ \sigma_j^2 = \sum_{r=1}^{k} x_{jr}^2 \, a_{rr} \tag{2.5} \]

where \( x_{jr} \) is the (j, r)th element of X. When \( \sigma_j^2 \) in (2.1) is replaced by (2.5), the n equations are solved for the k unknowns \( (a_{11}, \ldots, a_{kk}) \) by applying the least squares criterion.

One paper presents algorithms for efficiently computing the covariance matrix for features that form sub-windows in a large multi-dimensional image.
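A minimal sketch of the NumPy approach mentioned above: it builds the mean vector and sample covariance matrix, extracts eigenvalues and eigenvectors with `np.linalg.eigh` (suited to symmetric matrices), and computes the variance along a chosen direction as a quadratic form. The data and the helper `variance_along` are hypothetical and serve only as illustration.

```python
import numpy as np

# Hypothetical correlated 2D data: 500 observations, 2 variables.
rng = np.random.default_rng(1)
mixing = np.array([[2.0, 0.0], [1.2, 0.5]])
data = rng.normal(size=(500, 2)) @ mixing

# Mean vector and variance-covariance matrix (rows are observations).
mean_vector = data.mean(axis=0)
cov = np.cov(data, rowvar=False)

# eigh returns eigenvalues in ascending order; eigenvectors are the columns.
eigenvalues, eigenvectors = np.linalg.eigh(cov)


def variance_along(direction, cov_matrix):
    """Variance of the data along a direction: u^T S u for unit vector u."""
    u = np.asarray(direction, dtype=float)
    u = u / np.linalg.norm(u)
    return float(u @ cov_matrix @ u)


# The direction of largest variance is the last eigenvector.
top_direction = eigenvectors[:, -1]
projected = (data - mean_vector) @ top_direction

print("eigenvalues:", eigenvalues)
print("variance along top eigenvector:", variance_along(top_direction, cov))
print("variance of projected data:", projected.var(ddof=1))
```

The last two printed values should agree with the largest eigenvalue, which is why the eigenvectors of the covariance matrix are used as principal directions of the data.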
